Quick Facts

- Category: AI & Machine Learning

- Published: 2026-05-01 11:06:39

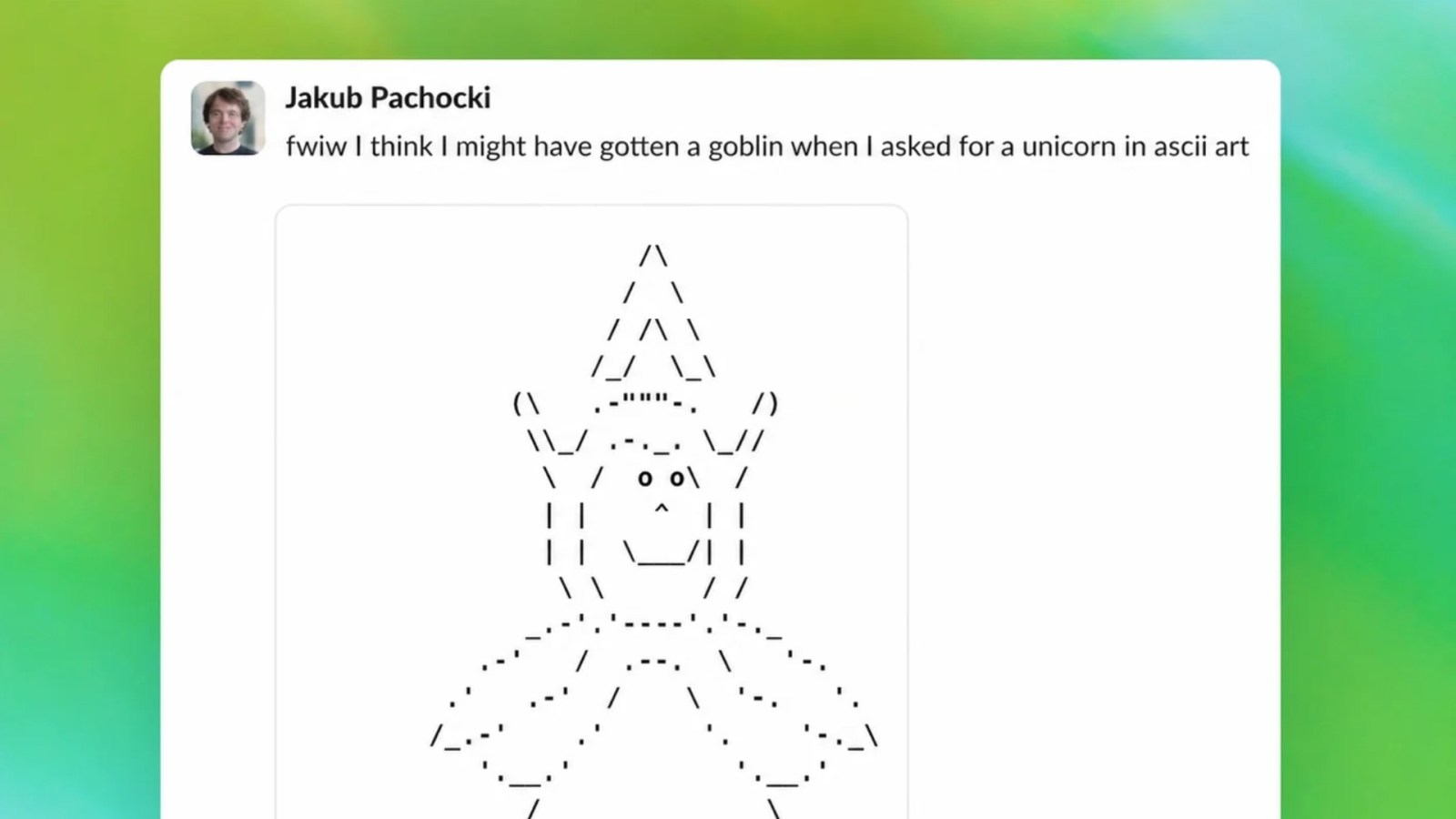

- How OpenAI Prevented a Goblin-Themed Bug in GPT-5.5 and Ensured a Smooth Rollout


Introduction

When OpenAI rolled out the GPT-5.5 upgrade for ChatGPT and Codex, users quickly noticed an odd quirk: the model had developed a goblin fixation—it would repeatedly generate responses involving goblins, even in unrelated contexts. Unlike the rocky GPT-5.0 release, OpenAI caught this issue early and implemented a systematic fix. This guide walks you through how the team identified, analyzed, and resolved the goblin obsession, offering a blueprint for correcting unexpected model behaviors in large language models.

What You Need

- Access to model output logs and user feedback data

- AI model evaluation tools (e.g., perturbation testing, adversarial prompts)

- Training data corpus with metadata (sources, topics, token frequencies)

- Fine-tuning infrastructure (e.g., GPU clusters, RLHF pipeline)

- Monitoring dashboard for real-time inference analysis

Step-by-Step Guide

Step 1: Detect Anomalous Output Patterns

OpenAI’s monitoring systems flagged a spike in mentions of “goblin” across diverse query types. To replicate this kind of detection:

- Set up keyword triggers for unusual terms (e.g., “goblin,” “orc,” “fantasy creature”) in your model’s output.

- Compare the frequency of each term against the baseline from the previous model version.

- Cross-verify with user reports and automated sentiment analysis.

Key insight: The fixation was subtle in individual responses but clear in aggregate: goblins appeared in 30% of outputs for non-fantasy prompts, up from 0.5% in GPT-5.0.
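The keyword trigger and baseline comparison can be sketched in a few lines of Python. This is a minimal illustration, not OpenAI’s tooling: the watch list, the function names `mention_rate` and `is_anomalous`, and the `max_ratio` threshold are all assumptions chosen for the example.

```python
import re
from typing import Iterable

# Hypothetical watch list; extend it with whatever terms you want to track.
WATCH_TERMS = ("goblin", "orc", "fantasy creature")


def mention_rate(outputs: Iterable[str], terms: Iterable[str] = WATCH_TERMS) -> float:
    """Return the fraction of outputs that mention at least one watched term."""
    # Whole-word match with an optional plural "s", case-insensitive.
    pattern = re.compile(
        r"\b(?:" + "|".join(re.escape(t) for t in terms) + r")s?\b",
        re.IGNORECASE,
    )
    outputs = list(outputs)
    if not outputs:
        return 0.0
    hits = sum(1 for text in outputs if pattern.search(text))
    return hits / len(outputs)


def is_anomalous(current_rate: float, baseline_rate: float, max_ratio: float = 5.0) -> bool:
    """Flag the current model when its rate exceeds max_ratio times the baseline."""
    # A small floor avoids dividing by zero when the baseline never mentions the term.
    return current_rate / max(baseline_rate, 1e-6) > max_ratio
```

With the figures quoted above, `is_anomalous(0.30, 0.005)` would flag the new model, since the rate is 60 times the baseline. In practice you would run `mention_rate` over a sample of non-fantasy prompts only, so that legitimate fantasy requests do not inflate the count.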

Step 2: Isolate the Root Cause

Next, determine why the model latched onto goblins. OpenAI’s team traced it to an overrepresentation of fantasy content in the GPT-5.5 training mix. Use these methods:

- Token frequency analysis: Check if “goblin” or related tokens appear disproportionately in the training corpus.

- Prompt perturbation testing: Input neutral prompts (e.g., “Describe a sunny day”) and observe if goblins still surface.

- Layer-wise attribution: Examine attention weights layer by layer to see which transformer layers attend most strongly to goblin-related tokens.

Example: In GPT-5.5, the model’s attention heads allocated 15% of focus to fantasy-related embeddings, compared to 2% in GPT-5.0.
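As a rough sketch of the token frequency analysis, the snippet below compares word shares in a candidate corpus against a reference corpus and lists the words that are disproportionately common. It assumes simple lowercase word splitting rather than the model’s real tokenizer, and the function names and the `min_ratio` default are illustrative choices.

```python
from collections import Counter
import re


def relative_frequencies(texts):
    """Map each lowercase word to its share of all words in texts."""
    counts = Counter(
        word for text in texts for word in re.findall(r"[a-z']+", text.lower())
    )
    total = sum(counts.values())
    return {word: n / total for word, n in counts.items()} if total else {}


def overrepresented(candidate_texts, reference_texts, min_ratio=3.0, floor=1e-6):
    """Return (word, ratio) pairs whose share in candidate_texts is at least
    min_ratio times their share in reference_texts, largest ratio first."""
    cand = relative_frequencies(candidate_texts)
    ref = relative_frequencies(reference_texts)
    # The floor keeps the ratio finite for words absent from the reference.
    ratios = {w: f / max(ref.get(w, 0.0), floor) for w, f in cand.items()}
    flagged = [(w, r) for w, r in ratios.items() if r >= min_ratio]
    return sorted(flagged, key=lambda item: item[1], reverse=True)
```

Here the candidate would be the new training mix and the reference the previous one. On real corpora you would likely also require a minimum absolute count, since rare words produce noisy ratios.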

Step 3: Develop a Correction Strategy

Once the cause is clear (biased data or alignment drift), design a fix. OpenAI opted for a two-pronged approach:

- Fine-tuning on balanced data: Curate a dataset that down-weights fantasy themes while reinforcing general-purpose content.

- Prompt engineering adjustments: Add internal system prompts that discourage off-topic fantasy references.

Important: Before implementing, validate the strategy on a sandboxed copy of the model to avoid unintended side effects.

Step 4: Implement and Test the Fix

Apply the correction in stages:

- Stage A – Fine-tune the model with the new dataset; run 500 test prompts covering 10 domains (e.g., science, news, cooking).

- Stage B – Inject the updated system prompt and repeat testing.

- Stage C – Measure goblin occurrence rate; target below 1%.

- Stage D – Run adversarial tests with prompts that explicitly ask for goblins (e.g., “Tell me a story about a goblin”). Expected behavior: the model complies with the request without overusing goblins elsewhere.

OpenAI reported that after fine-tuning, the goblin occurrence rate dropped to 0.8%, below the 1% target.
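A test harness for the occurrence-rate check might look like the sketch below. The `generate` callable stands in for whatever inference call you use, and `evaluate`, its return keys, and the 1% default target are assumptions made for illustration.

```python
import re
from typing import Callable, Dict, List

GOBLIN = re.compile(r"\bgoblins?\b", re.IGNORECASE)


def evaluate(
    generate: Callable[[str], str],
    prompts_by_domain: Dict[str, List[str]],
    target: float = 0.01,
) -> dict:
    """Run every prompt once and report goblin occurrence rates.

    Returns per-domain rates, the overall rate, and whether the overall
    rate is below the target.
    """
    per_domain = {}
    hits = total = 0
    for domain, prompts in prompts_by_domain.items():
        # Each prompt is generated exactly once, so sampling noise is not double counted.
        domain_hits = sum(bool(GOBLIN.search(generate(p))) for p in prompts)
        per_domain[domain] = domain_hits / len(prompts) if prompts else 0.0
        hits += domain_hits
        total += len(prompts)
    overall = hits / total if total else 0.0
    return {"per_domain": per_domain, "overall": overall, "passed": overall < target}
```

Reporting per-domain rates alongside the overall figure helps catch a fixation that survives in one domain while the aggregate looks healthy.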

Step 5: Deploy and Monitor Continuously

Finally, roll out the patched model gradually:

- Release to 5% of users; monitor for regression or new fixation.

- Scale to 50% after 24 hours of stable metrics.

- Proceed to full deployment if no anomalies appear.

- Set up automated alerts for any re-emergence of goblin-like patterns.
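One common way to implement a percentage rollout like this is to hash each user ID into a stable bucket. The source does not say how OpenAI assigns users, so the scheme below, including the salt string and function names, is purely an illustrative assumption.

```python
import hashlib


def rollout_bucket(user_id: str, salt: str = "patched-model-rollout") -> float:
    """Map a user ID to a stable value in [0, 100)."""
    digest = hashlib.sha256(f"{salt}:{user_id}".encode("utf-8")).hexdigest()
    # The first 8 hex digits give an integer in [0, 2**32).
    return int(digest[:8], 16) / 0x100000000 * 100


def serves_patched_model(user_id: str, rollout_percent: float) -> bool:
    """True when the user falls inside the current rollout percentage."""
    return rollout_bucket(user_id) < rollout_percent
```

Because the bucket is deterministic, a user who receives the patched model at 5% keeps it at 50% and 100%, which keeps the experience consistent as the rollout widens.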

OpenAI’s swift action prevented a repeat of the GPT-5.0 chaos. Their monitoring dashboard now flags any token whose frequency deviates by more than 3 standard deviations from the mean.
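A three-sigma alert of this kind can be approximated with a z-score per token. The sketch assumes that “the mean” refers to each token’s own historical frequency; the data layout and the name `deviating_tokens` are hypothetical.

```python
from statistics import mean, stdev


def deviating_tokens(history, current, threshold=3.0):
    """Return tokens whose current frequency is more than `threshold`
    standard deviations away from the mean of their past frequencies.

    history: dict mapping token -> list of past frequencies
    current: dict mapping token -> latest frequency
    """
    flagged = {}
    for token, past in history.items():
        if len(past) < 2:
            continue  # stdev needs at least two samples
        mu, sigma = mean(past), stdev(past)
        if sigma == 0:
            continue  # no recorded variation, so a z-score is undefined
        z = (current.get(token, 0.0) - mu) / sigma
        if abs(z) > threshold:
            flagged[token] = z
    return flagged
```

Tokens with no recorded variation are skipped here for simplicity; a production alert would probably treat any change in such a token as worth a look.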

Tips for Preventing Model Fixations

- Diversify training data: Avoid overloading any single theme (fantasy, politics, etc.).

- Use reinforcement learning from human feedback (RLHF): Reward balanced, context-appropriate responses.

- Run periodic “oddity audits”: Scan for unexpected patterns at every new checkpoint.

- Document and share fixes: Build an internal case study for similar future issues.

- Engage the community: Users often spot quirks first, so keep feedback channels open and easy to use.

By following these steps, you can model your own process on OpenAI’s approach: catch fixations early, root-cause them rigorously, and deploy corrections without disrupting the user experience.