8 Game-Changing Insights into NVIDIA Spectrum-X and MRC for Gigascale AI

In the relentless pursuit of building the world’s most powerful AI factories, networking has become the silent bottleneck—or the secret weapon. NVIDIA’s Spectrum-X Ethernet fabric, now supercharged with Multipath Reliable Connection (MRC), is rewriting the rules for large-scale AI infrastructure. This isn’t just another network upgrade; it’s a fundamental shift in how data moves across clusters of thousands of GPUs. Here are eight critical things you need to know about the technology that’s powering OpenAI, Microsoft, and Oracle’s biggest AI ambitions.

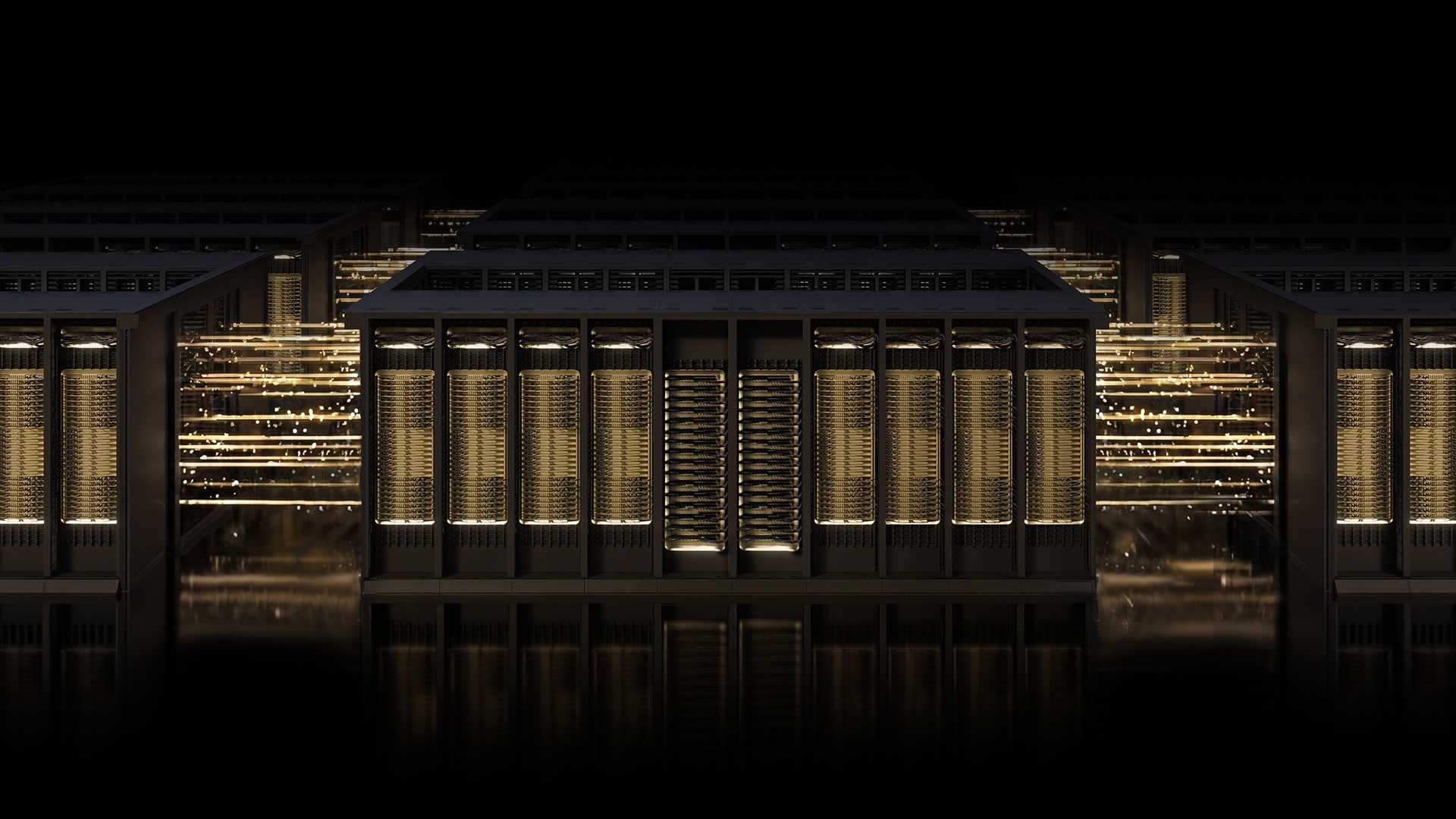

1. Spectrum-X: The AI-Native Ethernet Foundation

NVIDIA Spectrum-X is not your standard Ethernet switch. It’s a purpose-built, open networking platform designed from the ground up for AI workloads. Unlike general-purpose networks that struggle with the bursty, all-to-all communication patterns of large language model training, Spectrum-X integrates hardware acceleration, deep telemetry, and intelligent fabric control. Think of it as a superhighway system engineered specifically for the data demands of AI—where every nanosecond of latency and every bit of bandwidth matters. Deployed by industry titans like OpenAI, Microsoft, and Oracle, Spectrum-X provides the rock-solid foundation needed to train and deploy frontier models at gigascale.

2. MRC: From Single-Lane Road to Smart Street Grid

Multipath Reliable Connection (MRC) is an RDMA transport protocol that fundamentally changes how data flows across an AI fabric. Traditional single-path connections are like a single-lane road: if there’s a blockage, everything stops. MRC replaces that with a cleverly laid-out street grid system, paired with a real-time traffic app. It distributes a single RDMA connection across multiple physical network paths, automatically rerouting around jams and road closures. This means higher throughput, better load balancing, and dramatically improved availability for large-scale training runs. It’s the difference between a traffic nightmare and a smooth commute for your data.
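To make the street-grid picture concrete, here is a minimal Python sketch of the general idea: one connection's packets are spread across several paths, failed paths are skipped, and the receiver restores order by sequence number. It is a toy model under stated assumptions, not the MRC wire protocol. The names `spray_packets` and `reassemble`, and the simple round-robin policy, are hypothetical and chosen only for illustration.

```python
from collections import defaultdict


def spray_packets(num_packets, paths, failed=frozenset()):
    """Assign sequence numbers to paths round-robin, skipping failed paths.

    Hypothetical illustration only: a real multipath transport would use
    richer signals than a static round-robin.
    """
    healthy = [p for p in paths if p not in failed]
    if not healthy:
        raise RuntimeError("no healthy path available")
    assignment = defaultdict(list)
    for seq in range(num_packets):
        assignment[healthy[seq % len(healthy)]].append(seq)
    return dict(assignment)


def reassemble(arrivals):
    """Receiver side: restore the original order from (seq, payload) pairs
    that may have arrived out of order over different paths."""
    return [payload for _, payload in sorted(arrivals, key=lambda a: a[0])]
```

With three paths and six packets, each path carries two packets. If one path is marked as failed, the same six packets are simply split across the remaining two, which is the "rerouting around road closures" behavior in miniature.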

3. Proven in Production with OpenAI, Microsoft, and Oracle

MRC isn’t a theoretical concept. It is running in production in some of the world’s largest AI factories. OpenAI, Microsoft, and NVIDIA collaborated to bring MRC to life in the Blackwell generation. Sachin Katti, head of industrial compute at OpenAI, noted that MRC’s end-to-end approach “enabled us to avoid much of the typical network-related slowdowns and interruptions and maintain the efficiency of frontier training runs at scale.” Microsoft’s Fairwater and Oracle Cloud Infrastructure’s Abilene data centers, two of the largest AI factories for training frontier LLMs, rely on MRC to hit their performance, scale, and efficiency targets. These deployments show that MRC works where it counts.

4. Open Through the Open Compute Project

NVIDIA has released MRC as an open specification through the Open Compute Project (OCP). This move democratizes the technology, allowing the entire industry to adopt and innovate on top of it. By opening the protocol, NVIDIA isn’t just selling hardware; it’s contributing to a standard that can accelerate AI networking for everyone. The Spectrum-X platform’s combination of purpose-built hardware, deep telemetry, and intelligent fabric control makes it the ideal environment to incubate and deploy such open protocols. MRC’s journey from concept to gigascale AI production is a testament to what’s possible when ecosystem collaboration meets robust engineering.

5. Maximum GPU Utilization Through Dynamic Load Balancing

One of MRC’s superpowers is its ability to keep every GPU fed with data. In large-scale training, idle GPUs are wasted money and time. MRC load-balances traffic across all available network paths, ensuring each GPU gets the bandwidth it needs throughout the entire training run. Even when congestion hits—and it always does at scale—MRC dynamically avoids overloaded paths in real time. The result is sustained high bandwidth and consistently high GPU utilization, directly translating to faster training times and lower costs. This is critical for frontier models that can take weeks or months to train.
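The "avoid overloaded paths in real time" idea can be sketched in a few lines of Python. This is a simplified model, assuming the sender can observe a per-path queue depth. The functions `pick_path` and `send_burst`, the `queue_depth` mapping, and the threshold value are all hypothetical and do not describe how MRC actually measures or reacts to congestion.

```python
def pick_path(queue_depth, congestion_threshold):
    """Return the least-loaded path, preferring paths below the threshold.

    queue_depth maps a path name to its current (assumed) queue depth.
    If every path is congested, fall back to the least-loaded one overall.
    """
    uncongested = {p: d for p, d in queue_depth.items() if d < congestion_threshold}
    candidates = uncongested or queue_depth
    return min(candidates, key=candidates.get)


def send_burst(num_packets, queue_depth, congestion_threshold=8):
    """Send packets one at a time, re-evaluating path load before each send."""
    depth = dict(queue_depth)  # work on a copy; the caller's view is untouched
    sent = {p: 0 for p in depth}
    for _ in range(num_packets):
        path = pick_path(depth, congestion_threshold)
        depth[path] += 1  # each packet adds to the chosen path's queue
        sent[path] += 1
    return sent
```

Starting from depths of 0, 10, and 2 on three paths, a six-packet burst sends nothing over the congested middle path and evens out the other two, so no single link becomes the bottleneck.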

6. Intelligent Retransmission Minimizes Downtime

Packet loss is inevitable in any large network, but how you handle it matters. MRC features intelligent retransmission that enables rapid, precise recovery from packet loss. When a short-lived interruption occurs, the protocol quickly retransmits only the lost data instead of resending data that already arrived. This minimizes the impact on long-running jobs, preventing GPU idle time and keeping training pipelines flowing. When a single job might run for weeks across thousands of GPUs, even minutes of avoidable idle time add up to real cost and delay.
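The difference between resending only what was lost and resending everything after the first gap is easy to see in code. The Python sketch below compares a selective strategy with a classic go-back-N strategy. It is an illustration of the general technique, not MRC's actual recovery logic, and the function names and the `send_buffer` structure are hypothetical.

```python
def missing_sequences(received, highest_sent):
    """Sequence numbers in 0..highest_sent that the receiver has not seen."""
    return sorted(set(range(highest_sent + 1)) - set(received))


def selective_retransmit(send_buffer, received):
    """Resend only the packets that were actually lost."""
    highest = max(send_buffer)
    return {seq: send_buffer[seq] for seq in missing_sequences(received, highest)}


def go_back_n_retransmit(send_buffer, received):
    """For contrast: resend everything from the first lost packet onward."""
    highest = max(send_buffer)
    missing = missing_sequences(received, highest)
    if not missing:
        return {}
    return {seq: send_buffer[seq] for seq in range(missing[0], highest + 1)}
```

If packets 0 through 4 were sent and 2 and 4 were lost, the selective version resends two packets, while go-back-N resends three, including packet 3, which had already arrived. At fabric scale that wasted bandwidth is what a precise recovery scheme avoids.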

7. Fine-Grained Visibility and Control for Administrators

Operating a gigascale AI fabric is no small feat. Administrators need to see exactly what’s happening in the network and quickly fix issues. MRC provides fine-grained visibility into traffic paths, allowing operators to monitor load distribution, detect bottlenecks, and troubleshoot problems with surgical precision. This level of control simplifies day-to-day operations and accelerates root cause analysis when performance degrades. Combined with Spectrum-X’s deep telemetry, it gives administrators the data they need to turn network complexity into actionable insights.
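As a rough illustration of what monitoring load distribution can look like, the Python sketch below takes per-path byte counters and flags paths carrying far more than their share. The counter format, the `path_report` function, and the `hot_factor` threshold are assumptions made for this example. They are not the Spectrum-X telemetry interface or any MRC API.

```python
from statistics import mean


def path_report(bytes_per_path, hot_factor=1.5):
    """Summarize load per path and flag paths well above the average.

    bytes_per_path: hypothetical counters, path name -> bytes carried.
    hot_factor: a path is flagged when it carries more than this multiple
    of the average load (an arbitrary illustrative threshold).
    """
    total = sum(bytes_per_path.values())
    avg = mean(bytes_per_path.values())
    report = {}
    for path, carried in bytes_per_path.items():
        report[path] = {
            "share": carried / total if total else 0.0,
            "hot": carried > hot_factor * avg,
        }
    return report
```

Given counters of 100, 100, and 400 bytes, the third path is flagged because it carries two thirds of the traffic, which is the kind of imbalance an operator would want to investigate first.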

8. The Future of AI Networking Is Open and Multipath

NVIDIA Spectrum-X with MRC sets a new standard for AI networking: one that is open, AI-native, and built for gigascale. As AI models continue to grow in size and complexity, the network must evolve to avoid becoming the bottleneck. MRC’s multipath approach, combined with Spectrum-X’s purpose-built hardware, provides a blueprint for the future. With industry leaders already adopting and contributing to this ecosystem, it’s clear that open, intelligent, and resilient networking is the path forward for anyone serious about building the next generation of AI factories.

The race to build the most powerful AI factories is far from over, but with Spectrum-X and MRC, NVIDIA has provided a networking foundation that can keep pace with the ambitions of AI itself. Whether you’re deploying at OpenAI scale or building your own cluster, these eight insights highlight why this technology matters now more than ever.